At Syndis, we have always believed that to defend effectively, you must understand how to attack. That philosophy hasn’t changed with the rise of AI; it has intensified.

Artificial Intelligence is transforming cybersecurity faster than any previous technology wave. But despite the hype, AI is neither a silver bullet nor an autonomous hacker replacing human expertise. It is a force multiplier, and like any powerful tool, it must be guided, constrained, and governed. Here is how we approach AI at Syndis.

AI on the Defensive Side

On the defensive front, AI allows us to enhance detection, accelerate response, and uncover patterns that would otherwise remain buried in massive datasets.

In practical terms, we use AI to:

- Correlate large volumes of log data

- Identify anomalies across complex infrastructures

- Assist SOC analysts with prioritization

- Detect subtle attack chains across time

- Improve threat intelligence analysis

However, AI does not “run the SOC.”

Our 24/7 monitoring is still led by experienced security analysts. AI helps reduce noise and increase signal, but it is the human analyst who understands business context, attacker motivation, and operational impact.

AI suggests. Humans decide.
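
As a rough illustration of that split, the sketch below lets a model-assigned score order the queue while only a human verdict can make an alert actionable. Everything in it is an assumption made for the example: the `Alert` class, the `model_score` field, and the verdict names are hypothetical and do not describe Syndis tooling.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical verdicts an analyst may record; not taken from any real product.
VALID_VERDICTS = {"escalate", "investigate", "dismiss"}


@dataclass
class Alert:
    alert_id: str
    summary: str
    model_score: float                     # assumed 0.0-1.0 priority suggested by a model
    analyst_verdict: Optional[str] = None  # set only by a human analyst
    decided_by: Optional[str] = None


def prioritize(alerts: List[Alert]) -> List[Alert]:
    """Order alerts by the model's suggested priority, highest first."""
    return sorted(alerts, key=lambda a: a.model_score, reverse=True)


def record_verdict(alert: Alert, analyst: str, verdict: str) -> Alert:
    """Attach a human decision; the model score never sets the verdict."""
    if verdict not in VALID_VERDICTS:
        raise ValueError(f"unknown verdict: {verdict}")
    alert.analyst_verdict = verdict
    alert.decided_by = analyst
    return alert


def actionable(alerts: List[Alert]) -> List[Alert]:
    """Only alerts that a human has escalated are eligible for response."""
    return [a for a in alerts if a.analyst_verdict == "escalate"]


if __name__ == "__main__":
    queue = prioritize([
        Alert("a1", "Unusual login time", 0.35),
        Alert("a2", "Possible lateral movement", 0.92),
    ])
    record_verdict(queue[0], "analyst-on-shift", "escalate")
    for alert in actionable(queue):
        print(alert.alert_id, alert.summary, alert.decided_by)
```

The design choice worth noting is that a high score changes only the order in which an analyst sees alerts. Nothing becomes actionable until a named person records a decision.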

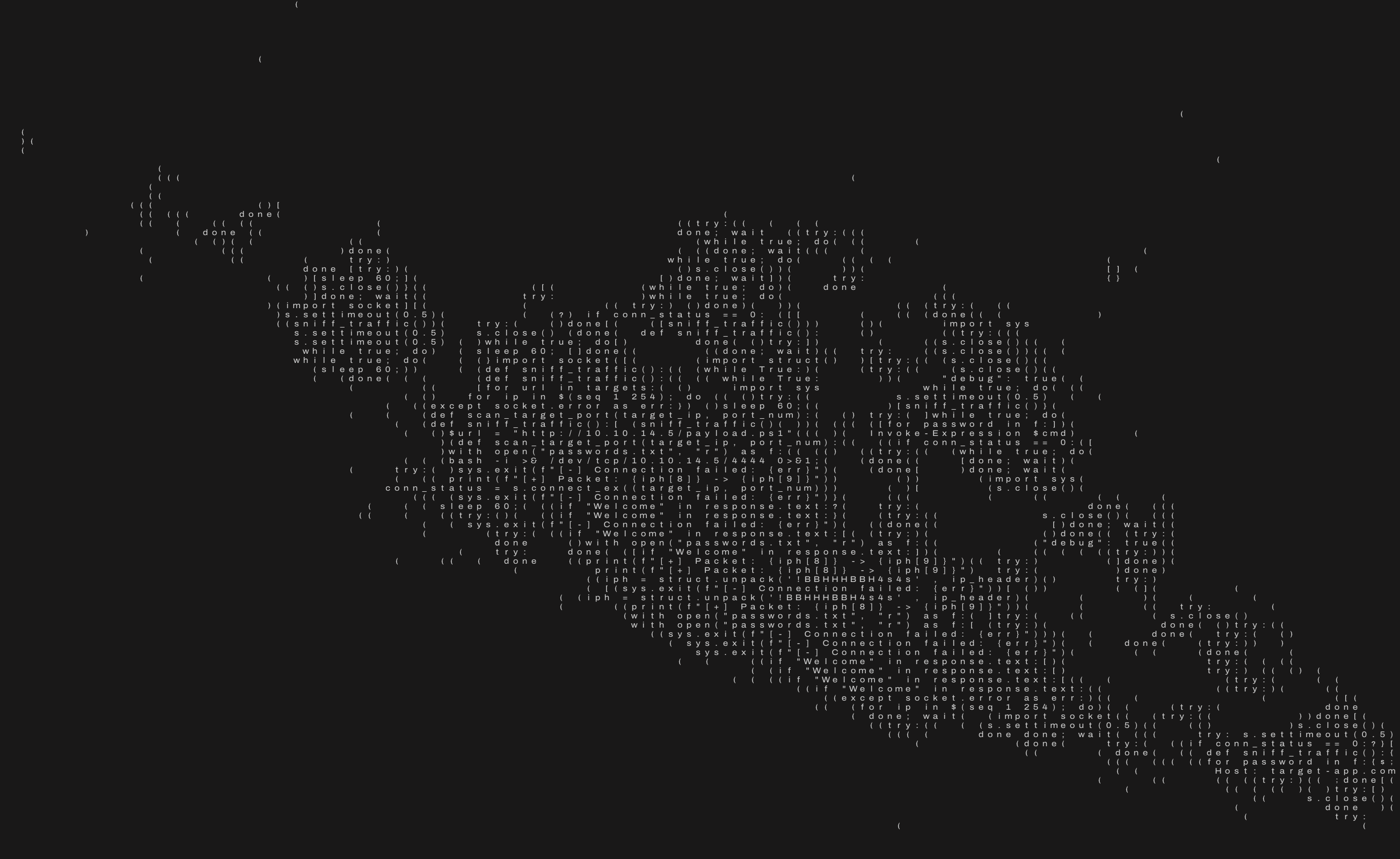

AI on the Offensive (Hacking) Side

Where things get even more interesting is on the offensive side. AI dramatically enhances penetration testing and red teaming by:

- Assisting in code review and vulnerability discovery

- Generating exploit hypotheses

- Automating repetitive reconnaissance tasks

- Helping analyze large attack surfaces

- Supporting phishing simulation content creation

But here is the critical point:

An AI hacker is only as good as the human hacker guiding it. Left unguided, an LLM produces generic output. Guided by a seasoned offensive security professional, it becomes a highly capable assistant that accelerates discovery and expands creative attack thinking.

An experienced hacker knows:

- What to ask

- How to validate output

- When the model is hallucinating

- Where real-world exploitation differs from theoretical vulnerability

- How attackers chain weaknesses in practice

AI does not think like an attacker. It predicts like a language model. The human hacker provides strategy, intuition, and adversarial creativity.

Why AI Must Be Sovereign

As AI becomes integrated into cybersecurity workflows, data governance becomes a national security issue.

At Syndis, we believe strongly in:

- Keeping sensitive data within Iceland

- Ensuring customer data is not shared across borders

- Maintaining full control over AI processing environments

- Deploying models in controlled, sovereign infrastructure

When AI tools analyze logs, code, or incident data, that information may include:

- Business-critical intellectual property

- Sensitive customer records

- Security weaknesses

- National infrastructure details

This data cannot be exposed to foreign jurisdictions or third-party training pipelines.

AI used in cybersecurity must operate under strict data residency and privacy controls. For us, that means Icelandic data, Icelandic governance, and full transparency. Sovereignty is not marketing. It is security.
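
One small piece of such a control can be sketched in code: refusing to send data to any AI endpoint that is not on an explicit allowlist. This is a minimal sketch under stated assumptions. The hostname, the `ResidencyError` class, and the `check_endpoint` function are hypothetical, and a hostname allowlist does not by itself prove where processing physically happens. It only blocks accidental use of unapproved services.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of inference hosts run inside the approved environment.
APPROVED_HOSTS = frozenset({"inference.internal.example"})


class ResidencyError(Exception):
    """Raised when data would be sent outside the approved processing environment."""


def check_endpoint(url: str) -> str:
    """Return the URL unchanged if it targets an approved host over HTTPS."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        raise ResidencyError(f"refusing non-HTTPS endpoint: {url}")
    host = (parts.hostname or "").lower()
    if host not in APPROVED_HOSTS:
        raise ResidencyError(f"host not on the approved list: {host or '(none)'}")
    return url


if __name__ == "__main__":
    for candidate in (
        "https://inference.internal.example/v1/analyze",
        "https://api.thirdparty.example/v1/analyze",
    ):
        try:
            check_endpoint(candidate)
            print("allowed:", candidate)
        except ResidencyError as err:
            print("blocked:", err)
```

In practice a check like this would sit alongside network-level and contractual controls rather than replace them.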

The Real Risk: Overtrusting AI

The greatest danger is not malicious AI. The greatest danger is blind trust in AI.

Over-automation leads to:

- Missed context

- False positives accepted as truth

- Hallucinated vulnerabilities

- Poor remediation advice

- Security theater instead of security improvement

Cybersecurity remains a human discipline. AI accelerates; humans judge, decide, and take responsibility.

The Future: Human-Led, AI-Enhanced Security

We see AI as a permanent and powerful part of cybersecurity, both defensive and offensive. But we do not see it replacing professionals.

Instead, we see: Human expertise × AI acceleration = Better security outcomes.

At Syndis, our mission remains the same:

- Think like attackers

- Defend like engineers

- Operate with integrity

- Protect with sovereignty

AI strengthens that mission, but only under human control and within secure national boundaries.

Because in cybersecurity, responsibility and trust cannot be automated.